Track notebooks, scripts & functions¶

For tracking pipelines, see: Pipelines – workflow managers.

# pip install 'lamindb[jupyter]'

!lamin init --storage ./test-track


→ initialized lamindb: testuser1/test-track

Track a notebook or script¶

Call track() to register your notebook or script as a transform and start capturing inputs & outputs of a run.

import lamindb as ln

ln.track() # initiate a tracked notebook/script run

# your code automatically tracks inputs & outputs

ln.finish() # mark run as finished, save execution report, source code & environment

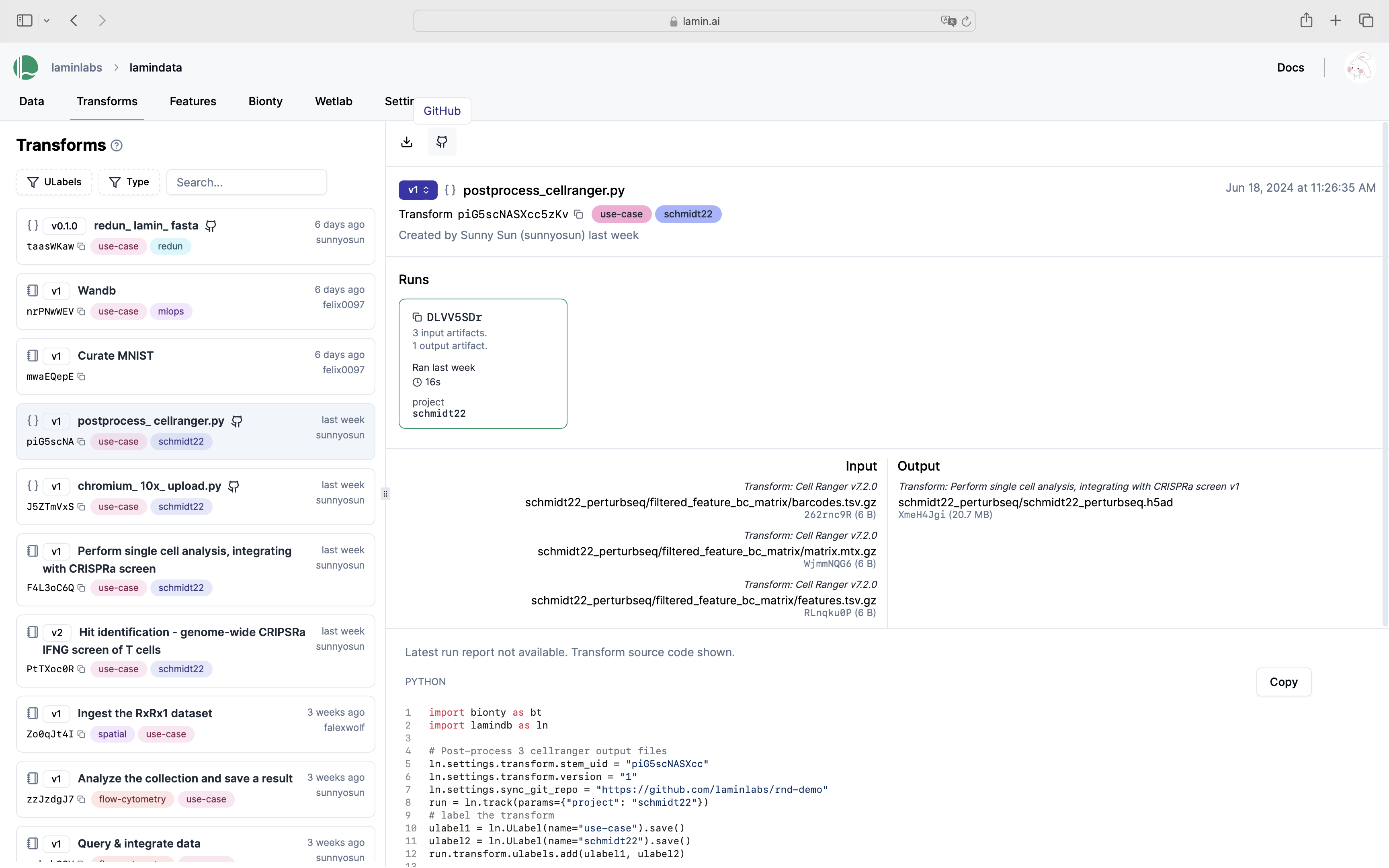

Here is how a notebook with a run report looks on the hub.

Explore it here.

Assign a uid to a notebook or script as you would for an ELN.

To retain a single version history when renaming notebooks and scripts, pass a uid to ln.track(), e.g.:

ln.track("9priar0hoE5u0000")

To obtain a uid value, copy it from the logging statement (Transform('9priar0hoE5u0000')) printed when you run ln.track() for the first time without passing a uid.

You can find your notebooks and scripts in the Transform registry (along with pipelines & functions). The Run registry stores their executions.

You can use any of the usual query methods to obtain one or more transform records, e.g.:

transform = ln.Transform.get(key="my_analyses/my_notebook.ipynb")

transform.source_code # source code

transform.runs # all runs

transform.latest_run.report # report of latest run

transform.latest_run.environment # environment of latest run

To load a notebook or script from the hub, search or filter the transform page and use the CLI.

lamin load https://lamin.ai/laminlabs/lamindata/transform/13VINnFk89PE

Track projects¶

You can link the entities created during a run to a project.

import lamindb as ln

my_project = ln.Project(name="My project").save() # create a project

ln.track(project="My project") # auto-link entities to "My project"

ln.Artifact(ln.core.datasets.file_fcs(), key="my_file.fcs").save() # save an artifact


→ connected lamindb: testuser1/test-track

→ created Transform('SofKDGEYF6PJ0000'), started new Run('G38x79yw...') at 2025-03-10 11:51:03 UTC

→ notebook imports: lamindb==1.2.0

Artifact(uid='3ygfYgnlwhqI49dy0000', is_latest=True, key='my_file.fcs', suffix='.fcs', size=19330507, hash='rCPvmZB19xs4zHZ7p_-Wrg', space_id=1, storage_id=1, run_id=1, created_by_id=1, created_at=2025-03-10 11:51:06 UTC)

Filter entities by project.

ln.Artifact.filter(projects=my_project).df() # for example, filter artifacts


| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 3ygfYgnlwhqI49dy0000 | my_file.fcs | None | .fcs | None | None | 19330507 | rCPvmZB19xs4zHZ7p_-Wrg | None | None | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:06.656000+00:00 | 1 | None | 1 |

Access all entities linked to a project.

display(my_project.artifacts.df())

display(my_project.transforms.df())

display(my_project.runs.df())


| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 3ygfYgnlwhqI49dy0000 | my_file.fcs | None | .fcs | None | None | 19330507 | rCPvmZB19xs4zHZ7p_-Wrg | None | None | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:06.656000+00:00 | 1 | None | 1 |

| id | uid | key | description | type | source_code | hash | reference | reference_type | space_id | _template_id | version | is_latest | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | SofKDGEYF6PJ0000 | track.ipynb | Track notebooks, scripts & functions | notebook | None | None | None | None | 1 | None | None | True | 2025-03-10 11:51:03.390000+00:00 | 1 | None | 1 |

| id | uid | name | started_at | finished_at | reference | reference_type | _is_consecutive | _status_code | space_id | transform_id | report_id | _logfile_id | environment_id | initiated_by_run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | G38x79ywBD5xrym6viZg | None | 2025-03-10 11:51:03.401601+00:00 | None | None | None | None | 0 | 1 | 1 | None | None | None | None | 2025-03-10 11:51:03.402000+00:00 | 1 | None | 1 |

Track parameters¶

In addition to tracking source code, run reports & environments, you can track run parameters.

Track run parameters¶

First, define valid parameters, e.g.:

ln.Param(name="input_dir", dtype=str).save()

ln.Param(name="learning_rate", dtype=float).save()

ln.Param(name="preprocess_params", dtype="dict").save()


Param(name='preprocess_params', dtype='dict', space_id=1, created_by_id=1, run_id=1, created_at=2025-03-10 11:51:06 UTC)

If you hadn’t defined these parameters, you’d get a ValidationError in the following script.

import argparse
import lamindb as ln

if __name__ == "__main__":
    p = argparse.ArgumentParser()
    p.add_argument("--input-dir", type=str)
    p.add_argument("--downsample", action="store_true")
    p.add_argument("--learning-rate", type=float)
    args = p.parse_args()
    params = {
        "input_dir": args.input_dir,
        "learning_rate": args.learning_rate,
        "preprocess_params": {
            "downsample": args.downsample,  # nested parameter names & values in dictionaries are not validated
            "normalization": "the_good_one",
        },
    }
    ln.track(params=params)

    # your code

    ln.finish()

Run the script.

!python scripts/run-track-with-params.py --input-dir ./mydataset --learning-rate 0.01 --downsample


→ connected lamindb: testuser1/test-track

→ created Transform('aosEGfdSQlyn0000'), started new Run('e3pw2GIA...') at 2025-03-10 11:51:10 UTC

→ params: input_dir=./mydataset, learning_rate=0.01, preprocess_params={'downsample': True, 'normalization': 'the_good_one'}

→ finished Run('e3pw2GIA') after 1s at 2025-03-10 11:51:12 UTC
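As a rough illustration of the validation rule, here is a minimal pure-Python sketch. It is not lamindb's implementation: `registered`, `validate_params`, and the local `ValidationError` class are hypothetical stand-ins, and the mapping from dtype names to Python types is an assumption. It checks only top-level names, mirroring the comment in the script that nested dictionary entries are not validated.

```python
# Toy model of parameter validation; NOT lamindb's implementation.
class ValidationError(Exception):
    """Hypothetical stand-in for the error raised on undefined parameters."""


# Hypothetical registry: parameter name -> expected Python type
registered = {"input_dir": str, "learning_rate": float, "preprocess_params": dict}


def validate_params(params: dict, registry: dict) -> None:
    """Raise if a top-level name is unknown or its value has the wrong type."""
    for name, value in params.items():
        if name not in registry:
            raise ValidationError(f"parameter {name!r} is not defined")
        if not isinstance(value, registry[name]):
            raise ValidationError(f"parameter {name!r} expects {registry[name].__name__}")


params = {
    "input_dir": "./mydataset",
    "learning_rate": 0.01,
    # keys inside this dictionary are never looked up in the registry
    "preprocess_params": {"downsample": True, "normalization": "the_good_one"},
}
validate_params(params, registered)  # passes: all top-level names are registered

try:
    validate_params({"batch_size": 32}, registered)  # "batch_size" was never defined
except ValidationError as error:
    message = str(error)
```

In this sketch, defining the parameter first (adding it to `registered`) is what makes the call succeed, which is the same order of operations the guide follows with ln.Param.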

Query by run parameters¶

Query for all runs that match certain parameters:

ln.Run.params.filter(

learning_rate=0.01, input_dir="./mydataset", preprocess_params__downsample=True

).df()


| id | uid | name | started_at | finished_at | reference | reference_type | _is_consecutive | _status_code | space_id | transform_id | report_id | _logfile_id | environment_id | initiated_by_run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2 | e3pw2GIAh9LPbuqX9Ssb | None | 2025-03-10 11:51:10.638584+00:00 | 2025-03-10 11:51:12.129604+00:00 | None | None | True | 0 | 1 | 2 | 3 | None | 2 | None | 2025-03-10 11:51:10.639000+00:00 | 1 | None | 1 |
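The nested lookup `preprocess_params__downsample=True` in this query can be pictured with a small pure-Python sketch. This is only a toy model of the double-underscore convention, not lamindb's query engine; the helper name `matches` is hypothetical.

```python
# Toy model of a double-underscore lookup; NOT lamindb's query engine.
def matches(params: dict, lookup: str, expected) -> bool:
    """Follow each '__'-separated key into nested dicts and compare the leaf."""
    value = params
    for key in lookup.split("__"):
        if not isinstance(value, dict) or key not in value:
            return False  # the path does not exist in this run's parameters
        value = value[key]
    return value == expected


run_params = {
    "input_dir": "./mydataset",
    "learning_rate": 0.01,
    "preprocess_params": {"downsample": True, "normalization": "the_good_one"},
}

hit = matches(run_params, "preprocess_params__downsample", True)    # nested key matches
miss = matches(run_params, "preprocess_params__downsample", False)  # nested key differs
flat = matches(run_params, "learning_rate", 0.01)                   # top-level key
```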

Note that:

- preprocess_params__downsample=True traverses the dictionary preprocess_params to find the key "downsample" and match it to True
- nested keys like "downsample" in a dictionary do not appear in Param and hence do not get validated

Access parameters of a run¶

Below is how you get the parameter values that were used for a given run.

run = ln.Run.params.filter(learning_rate=0.01).order_by("-started_at").first()

run.params.get_values()


{'input_dir': './mydataset',

'learning_rate': 0.01,

'preprocess_params': {'downsample': True, 'normalization': 'the_good_one'}}

Here is how it looks on the hub.

Explore all parameter values¶

If you want to query all parameter values across all runs, use ParamValue.

ln.models.ParamValue.df(include=["param__name", "created_by__handle"])


| id | value | hash | space_id | param__name | created_by__handle |
|---|---|---|---|---|---|
| 1 | ./mydataset | None | 1 | input_dir | testuser1 |
| 2 | 0.01 | None | 1 | learning_rate | testuser1 |
| 3 | {'downsample': True, 'normalization': 'the_goo... | None | 1 | preprocess_params | testuser1 |


assert run.params.get_values() == {

"input_dir": "./mydataset",

"learning_rate": 0.01,

"preprocess_params": {"downsample": True, "normalization": "the_good_one"},

}

Track functions¶

If you want more fine-grained data lineage tracking, use the tracked() decorator.

In a notebook¶

ln.Param(name="subset_rows", dtype="int").save() # define parameters

ln.Param(name="subset_cols", dtype="int").save()

ln.Param(name="input_artifact_key", dtype="str").save()

ln.Param(name="output_artifact_key", dtype="str").save()

Param(name='output_artifact_key', dtype='str', space_id=1, created_by_id=1, run_id=1, created_at=2025-03-10 11:51:13 UTC)

Define a function and decorate it with tracked():

@ln.tracked()
def subset_dataframe(
    input_artifact_key: str,
    output_artifact_key: str,
    subset_rows: int = 2,
    subset_cols: int = 2,
) -> None:
    artifact = ln.Artifact.get(key=input_artifact_key)
    dataset = artifact.load()
    new_data = dataset.iloc[:subset_rows, :subset_cols]
    ln.Artifact.from_df(new_data, key=output_artifact_key).save()

Prepare a test dataset:

df = ln.core.datasets.small_dataset1(otype="DataFrame")

input_artifact_key = "my_analysis/dataset.parquet"

artifact = ln.Artifact.from_df(df, key=input_artifact_key).save()

Run the function with default params:

ouput_artifact_key = input_artifact_key.replace(".parquet", "_subsetted.parquet")

subset_dataframe(input_artifact_key, ouput_artifact_key)

Query for the output:

subsetted_artifact = ln.Artifact.get(key=ouput_artifact_key)

subsetted_artifact.view_lineage()

This is the run that created the subsetted_artifact:

subsetted_artifact.run

Run(uid='KUBeT9tGm256XqCsiFmh', started_at=2025-03-10 11:51:13 UTC, finished_at=2025-03-10 11:51:13 UTC, space_id=1, transform_id=3, created_by_id=1, initiated_by_run_id=1, created_at=2025-03-10 11:51:13 UTC)

This is the function that created it:

subsetted_artifact.run.transform

Transform(uid='sHWJJ5P8vZQc0000', is_latest=True, key='track.ipynb/subset_dataframe.py', type='function', hash='F_wwrfFs6zmzMGVilG2Prg', space_id=1, created_by_id=1, created_at=2025-03-10 11:51:13 UTC)

This is the source code of this function:

subsetted_artifact.run.transform.source_code

'@ln.tracked()\ndef subset_dataframe(\n input_artifact_key: str,\n output_artifact_key: str,\n subset_rows: int = 2,\n subset_cols: int = 2,\n) -> None:\n artifact = ln.Artifact.get(key=input_artifact_key)\n dataset = artifact.load()\n new_data = dataset.iloc[:subset_rows, :subset_cols]\n ln.Artifact.from_df(new_data, key=output_artifact_key).save()\n'

These are all versions of this function:

subsetted_artifact.run.transform.versions.df()

| id | uid | key | description | type | source_code | hash | reference | reference_type | space_id | _template_id | version | is_latest | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 3 | sHWJJ5P8vZQc0000 | track.ipynb/subset_dataframe.py | None | function | @ln.tracked()\ndef subset_dataframe(\n inpu... | F_wwrfFs6zmzMGVilG2Prg | None | None | 1 | None | None | True | 2025-03-10 11:51:13.591000+00:00 | 1 | None | 1 |

This is the initiating run that triggered the function call:

subsetted_artifact.run.initiated_by_run

Run(uid='G38x79ywBD5xrym6viZg', started_at=2025-03-10 11:51:03 UTC, space_id=1, transform_id=1, created_by_id=1, created_at=2025-03-10 11:51:03 UTC)

This is the transform of the initiating run:

subsetted_artifact.run.initiated_by_run.transform

Transform(uid='SofKDGEYF6PJ0000', is_latest=True, key='track.ipynb', description='Track notebooks, scripts & functions', type='notebook', space_id=1, created_by_id=1, created_at=2025-03-10 11:51:03 UTC)

These are the parameters of the run:

subsetted_artifact.run.params.get_values()

{'input_artifact_key': 'my_analysis/dataset.parquet',

'output_artifact_key': 'my_analysis/dataset_subsetted.parquet',

'subset_cols': 2,

'subset_rows': 2}
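Note that the recorded values include subset_rows and subset_cols even though the call passed only the two keys. The following toy sketch shows one way a decorator can capture defaults alongside explicit arguments, using the standard library's `inspect.signature`. It is an illustration under assumptions, not how tracked() is implemented; `capture_params` and `captured_runs` are hypothetical names.

```python
import functools
import inspect

captured_runs = []  # hypothetical in-memory stand-in for stored run parameters


def capture_params(func):
    """Record every argument of each call, including defaults that were not passed."""
    signature = inspect.signature(func)

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        bound = signature.bind(*args, **kwargs)
        bound.apply_defaults()  # fill in subset_rows=2 and subset_cols=2 when omitted
        captured_runs.append(dict(bound.arguments))
        return func(*args, **kwargs)

    return wrapper


@capture_params
def subset_dataframe(
    input_artifact_key: str,
    output_artifact_key: str,
    subset_rows: int = 2,
    subset_cols: int = 2,
) -> None:
    pass  # the real function body is irrelevant for this illustration


subset_dataframe("my_analysis/dataset.parquet", "my_analysis/dataset_subsetted.parquet")
```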

These are the input artifacts:

subsetted_artifact.run.input_artifacts.df()

| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 4 | zJz1Jh9KjAcC69MG0000 | my_analysis/dataset.parquet | None | .parquet | dataset | DataFrame | 6598 | N8O5K2TP4gL3fk42VYK5aA | None | 3 | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:13.569000+00:00 | 1 | None | 1 |

These are the output artifacts:

subsetted_artifact.run.output_artifacts.df()

| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 5 | RK9IUO0xw7Zbp8d40000 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3233 | LiK5RaETbDxoDhxEn9X3Vw | None | 2 | md5 | True | False | 1 | 1 | None | None | True | 3 | 2025-03-10 11:51:13.642000+00:00 | 1 | None | 1 |

Re-run the function with a different parameter:

subset_dataframe(  # returns None, so there is nothing to assign here
    input_artifact_key, ouput_artifact_key, subset_cols=3
)

subsetted_artifact = ln.Artifact.get(key=ouput_artifact_key)

subsetted_artifact.view_lineage()


→ creating new artifact version for key='my_analysis/dataset_subsetted.parquet' (storage: '/home/runner/work/lamindb/lamindb/docs/test-track')

We created a new run:

subsetted_artifact.run

Run(uid='0ub78nidDwB05BOgFRte', started_at=2025-03-10 11:51:14 UTC, finished_at=2025-03-10 11:51:14 UTC, space_id=1, transform_id=3, created_by_id=1, initiated_by_run_id=1, created_at=2025-03-10 11:51:14 UTC)

With new parameters:

subsetted_artifact.run.params.get_values()

{'input_artifact_key': 'my_analysis/dataset.parquet',

'output_artifact_key': 'my_analysis/dataset_subsetted.parquet',

'subset_cols': 3,

'subset_rows': 2}
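To see at a glance what changed between the two runs, the two dictionaries returned by get_values() can be compared with plain Python. The sketch below copies the values printed in this guide; `diff_params` is a hypothetical helper, not part of lamindb.

```python
def diff_params(old: dict, new: dict) -> dict:
    """Return {name: (old_value, new_value)} for every parameter that differs."""
    names = sorted(set(old) | set(new))
    return {
        name: (old.get(name), new.get(name))
        for name in names
        if old.get(name) != new.get(name)
    }


# Values as printed for the first and second run of subset_dataframe
first_run = {
    "input_artifact_key": "my_analysis/dataset.parquet",
    "output_artifact_key": "my_analysis/dataset_subsetted.parquet",
    "subset_cols": 2,
    "subset_rows": 2,
}
second_run = {
    "input_artifact_key": "my_analysis/dataset.parquet",
    "output_artifact_key": "my_analysis/dataset_subsetted.parquet",
    "subset_cols": 3,
    "subset_rows": 2,
}

changed = diff_params(first_run, second_run)
```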

And a new version of the output artifact:

subsetted_artifact.run.output_artifacts.df()

| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 6 | RK9IUO0xw7Zbp8d40001 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3839 | 4IcM2NblBj81aKsrT_8c1Q | None | 2 | md5 | True | False | 1 | 1 | None | None | True | 4 | 2025-03-10 11:51:14.221000+00:00 | 1 | None | 1 |
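Comparing this uid with the one from the first run (RK9IUO0xw7Zbp8d40000) suggests that versions of the same artifact share a common stem and differ only in the trailing characters. The sketch below assumes a four-character version suffix, which is inferred from the uids shown in this guide rather than taken from a documented guarantee; `split_uid` and `SUFFIX_LENGTH` are hypothetical names.

```python
SUFFIX_LENGTH = 4  # assumption inferred from the uids shown in this guide


def split_uid(uid: str) -> tuple[str, str]:
    """Split a uid into (stem, version suffix) under the assumption above."""
    return uid[:-SUFFIX_LENGTH], uid[-SUFFIX_LENGTH:]


old_stem, old_suffix = split_uid("RK9IUO0xw7Zbp8d40000")  # output of the first run
new_stem, new_suffix = split_uid("RK9IUO0xw7Zbp8d40001")  # output of the second run
same_lineage = old_stem == new_stem
```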

See the state of the database:

ln.view()


Artifact

| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 6 | RK9IUO0xw7Zbp8d40001 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3839 | 4IcM2NblBj81aKsrT_8c1Q | None | 2.0 | md5 | True | False | 1 | 1 | None | None | True | 4 | 2025-03-10 11:51:14.221000+00:00 | 1 | None | 1 |
| 5 | RK9IUO0xw7Zbp8d40000 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3233 | LiK5RaETbDxoDhxEn9X3Vw | None | 2.0 | md5 | True | False | 1 | 1 | None | None | False | 3 | 2025-03-10 11:51:13.642000+00:00 | 1 | None | 1 |
| 4 | zJz1Jh9KjAcC69MG0000 | my_analysis/dataset.parquet | None | .parquet | dataset | DataFrame | 6598 | N8O5K2TP4gL3fk42VYK5aA | None | 3.0 | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:13.569000+00:00 | 1 | None | 1 |
| 1 | 3ygfYgnlwhqI49dy0000 | my_file.fcs | None | .fcs | None | None | 19330507 | rCPvmZB19xs4zHZ7p_-Wrg | None | NaN | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:06.656000+00:00 | 1 | None | 1 |

Param

| id | name | dtype | is_type | _expect_many | space_id | type_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 7 | output_artifact_key | str | None | False | 1 | None | 1 | 2025-03-10 11:51:13.520000+00:00 | 1 | None | 1 |
| 6 | input_artifact_key | str | None | False | 1 | None | 1 | 2025-03-10 11:51:13.514000+00:00 | 1 | None | 1 |
| 5 | subset_cols | int | None | False | 1 | None | 1 | 2025-03-10 11:51:13.507000+00:00 | 1 | None | 1 |
| 4 | subset_rows | int | None | False | 1 | None | 1 | 2025-03-10 11:51:13.501000+00:00 | 1 | None | 1 |
| 3 | preprocess_params | dict | None | False | 1 | None | 1 | 2025-03-10 11:51:06.821000+00:00 | 1 | None | 1 |
| 2 | learning_rate | float | None | False | 1 | None | 1 | 2025-03-10 11:51:06.815000+00:00 | 1 | None | 1 |
| 1 | input_dir | str | None | False | 1 | None | 1 | 2025-03-10 11:51:06.807000+00:00 | 1 | None | 1 |

ParamValue

| id | value | hash | space_id | param_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|
| 1 | ./mydataset | None | 1 | 1 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 2 | 0.01 | None | 1 | 2 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 3 | {'downsample': True, 'normalization': 'the_goo... | None | 1 | 3 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 4 | my_analysis/dataset.parquet | None | 1 | 6 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 5 | my_analysis/dataset_subsetted.parquet | None | 1 | 7 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 6 | 2 | None | 1 | 4 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 7 | 2 | None | 1 | 5 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |

Project

| id | uid | name | is_type | abbr | url | start_date | end_date | _status_code | space_id | type_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | SqLhYL7wlywS | My project | False | None | None | None | None | 0 | 1 | None | None | 2025-03-10 11:51:02.758000+00:00 | 1 | None | 1 |

Run

| id | uid | name | started_at | finished_at | reference | reference_type | _is_consecutive | _status_code | space_id | transform_id | report_id | _logfile_id | environment_id | initiated_by_run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | G38x79ywBD5xrym6viZg | None | 2025-03-10 11:51:03.401601+00:00 | NaT | None | None | None | 0 | 1 | 1 | NaN | None | NaN | NaN | 2025-03-10 11:51:03.402000+00:00 | 1 | None | 1 |
| 2 | e3pw2GIAh9LPbuqX9Ssb | None | 2025-03-10 11:51:10.638584+00:00 | 2025-03-10 11:51:12.129604+00:00 | None | None | True | 0 | 1 | 2 | 3.0 | None | 2.0 | NaN | 2025-03-10 11:51:10.639000+00:00 | 1 | None | 1 |
| 3 | KUBeT9tGm256XqCsiFmh | None | 2025-03-10 11:51:13.597486+00:00 | 2025-03-10 11:51:13.649124+00:00 | None | None | None | 0 | 1 | 3 | NaN | None | NaN | 1.0 | 2025-03-10 11:51:13.598000+00:00 | 1 | None | 1 |
| 4 | 0ub78nidDwB05BOgFRte | None | 2025-03-10 11:51:14.170468+00:00 | 2025-03-10 11:51:14.227337+00:00 | None | None | None | 0 | 1 | 3 | NaN | None | NaN | 1.0 | 2025-03-10 11:51:14.170000+00:00 | 1 | None | 1 |

Storage

| id | uid | root | description | type | region | instance_uid | space_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MICS1FRqVOxt | /home/runner/work/lamindb/lamindb/docs/test-track | None | local | None | 73KPGC58ahU9 | 1 | None | 2025-03-10 11:50:58.301000+00:00 | 1 | None | 1 |

Transform

| id | uid | key | description | type | source_code | hash | reference | reference_type | space_id | _template_id | version | is_latest | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 3 | sHWJJ5P8vZQc0000 | track.ipynb/subset_dataframe.py | None | function | @ln.tracked()\ndef subset_dataframe(\n inpu... | F_wwrfFs6zmzMGVilG2Prg | None | None | 1 | None | None | True | 2025-03-10 11:51:13.591000+00:00 | 1 | None | 1 |
| 2 | aosEGfdSQlyn0000 | run-track-with-params.py | run-track-with-params.py | script | import argparse\nimport lamindb as ln\n\nif __... | nRUs3ZjuVTbKtBmSXpVQ5A | None | None | 1 | None | None | True | 2025-03-10 11:51:10.635000+00:00 | 1 | None | 1 |
| 1 | SofKDGEYF6PJ0000 | track.ipynb | Track notebooks, scripts & functions | notebook | None | None | None | None | 1 | None | None | True | 2025-03-10 11:51:03.390000+00:00 | 1 | None | 1 |

In a script¶

import argparse
import lamindb as ln

ln.Param(name="run_workflow_subset", dtype=bool).save()


@ln.tracked()
def subset_dataframe(
    artifact: ln.Artifact,
    subset_rows: int = 2,
    subset_cols: int = 2,
    run: ln.Run | None = None,
) -> ln.Artifact:
    dataset = artifact.load(is_run_input=run)
    new_data = dataset.iloc[:subset_rows, :subset_cols]
    new_key = artifact.key.replace(".parquet", "_subsetted.parquet")
    return ln.Artifact.from_df(new_data, key=new_key, run=run).save()


if __name__ == "__main__":
    p = argparse.ArgumentParser()
    p.add_argument("--subset", action="store_true")
    args = p.parse_args()

    params = {"run_workflow_subset": args.subset}

    ln.track(params=params)

    if args.subset:
        df = ln.core.datasets.small_dataset1(otype="DataFrame")
        artifact = ln.Artifact.from_df(df, key="my_analysis/dataset.parquet").save()
        subsetted_artifact = subset_dataframe(artifact)

    ln.finish()

!python scripts/run-workflow.py --subset


→ connected lamindb: testuser1/test-track

→ created Transform('PrBojotEZdcL0000'), started new Run('OH7QI1Y7...') at 2025-03-10 11:51:18 UTC

→ params: run_workflow_subset=True

→ returning existing artifact with same hash: Artifact(uid='zJz1Jh9KjAcC69MG0000', is_latest=True, key='my_analysis/dataset.parquet', suffix='.parquet', kind='dataset', otype='DataFrame', size=6598, hash='N8O5K2TP4gL3fk42VYK5aA', n_observations=3, space_id=1, storage_id=1, run_id=1, created_by_id=1, created_at=2025-03-10 11:51:13 UTC); to track this artifact as an input, use: ln.Artifact.get()

→ returning existing artifact with same hash: Artifact(uid='RK9IUO0xw7Zbp8d40001', is_latest=True, key='my_analysis/dataset_subsetted.parquet', suffix='.parquet', kind='dataset', otype='DataFrame', size=3839, hash='4IcM2NblBj81aKsrT_8c1Q', n_observations=2, space_id=1, storage_id=1, run_id=4, created_by_id=1, created_at=2025-03-10 11:51:14 UTC); to track this artifact as an input, use: ln.Artifact.get()

→ returning existing artifact with same hash: Artifact(uid='hnfGbT94wnmuIoyl0000', is_latest=True, description='log streams of run e3pw2GIAh9LPbuqX9Ssb', suffix='.txt', size=0, hash='1B2M2Y8AsgTpgAmY7PhCfg', space_id=1, storage_id=1, created_by_id=1, created_at=2025-03-10 11:51:12 UTC); to track this artifact as an input, use: ln.Artifact.get()

! updated description from log streams of run e3pw2GIAh9LPbuqX9Ssb to log streams of run OH7QI1Y7I5XFo9qfomAp

→ finished Run('OH7QI1Y7') after 1s at 2025-03-10 11:51:19 UTC

ln.view()


Artifact

| id | uid | key | description | suffix | kind | otype | size | hash | n_files | n_observations | _hash_type | _key_is_virtual | _overwrite_versions | space_id | storage_id | schema_id | version | is_latest | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 6 | RK9IUO0xw7Zbp8d40001 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3839 | 4IcM2NblBj81aKsrT_8c1Q | None | 2.0 | md5 | True | False | 1 | 1 | None | None | True | 4 | 2025-03-10 11:51:14.221000+00:00 | 1 | None | 1 |
| 5 | RK9IUO0xw7Zbp8d40000 | my_analysis/dataset_subsetted.parquet | None | .parquet | dataset | DataFrame | 3233 | LiK5RaETbDxoDhxEn9X3Vw | None | 2.0 | md5 | True | False | 1 | 1 | None | None | False | 3 | 2025-03-10 11:51:13.642000+00:00 | 1 | None | 1 |
| 4 | zJz1Jh9KjAcC69MG0000 | my_analysis/dataset.parquet | None | .parquet | dataset | DataFrame | 6598 | N8O5K2TP4gL3fk42VYK5aA | None | 3.0 | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:13.569000+00:00 | 1 | None | 1 |
| 1 | 3ygfYgnlwhqI49dy0000 | my_file.fcs | None | .fcs | None | None | 19330507 | rCPvmZB19xs4zHZ7p_-Wrg | None | NaN | md5 | True | False | 1 | 1 | None | None | True | 1 | 2025-03-10 11:51:06.656000+00:00 | 1 | None | 1 |

Param

| id | name | dtype | is_type | _expect_many | space_id | type_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 8 | run_workflow_subset | bool | None | False | 1 | None | NaN | 2025-03-10 11:51:18.283000+00:00 | 1 | None | 1 |
| 7 | output_artifact_key | str | None | False | 1 | None | 1.0 | 2025-03-10 11:51:13.520000+00:00 | 1 | None | 1 |
| 6 | input_artifact_key | str | None | False | 1 | None | 1.0 | 2025-03-10 11:51:13.514000+00:00 | 1 | None | 1 |
| 5 | subset_cols | int | None | False | 1 | None | 1.0 | 2025-03-10 11:51:13.507000+00:00 | 1 | None | 1 |
| 4 | subset_rows | int | None | False | 1 | None | 1.0 | 2025-03-10 11:51:13.501000+00:00 | 1 | None | 1 |
| 3 | preprocess_params | dict | None | False | 1 | None | 1.0 | 2025-03-10 11:51:06.821000+00:00 | 1 | None | 1 |
| 2 | learning_rate | float | None | False | 1 | None | 1.0 | 2025-03-10 11:51:06.815000+00:00 | 1 | None | 1 |

ParamValue

| id | value | hash | space_id | param_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|
| 1 | ./mydataset | None | 1 | 1 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 2 | 0.01 | None | 1 | 2 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 3 | {'downsample': True, 'normalization': 'the_goo... | None | 1 | 3 | 2025-03-10 11:51:10.655000+00:00 | 1 | None | 1 |
| 4 | my_analysis/dataset.parquet | None | 1 | 6 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 5 | my_analysis/dataset_subsetted.parquet | None | 1 | 7 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 6 | 2 | None | 1 | 4 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |
| 7 | 2 | None | 1 | 5 | 2025-03-10 11:51:13.616000+00:00 | 1 | None | 1 |

Project

| id | uid | name | is_type | abbr | url | start_date | end_date | _status_code | space_id | type_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | SqLhYL7wlywS | My project | False | None | None | None | None | 0 | 1 | None | None | 2025-03-10 11:51:02.758000+00:00 | 1 | None | 1 |

Run

| id | uid | name | started_at | finished_at | reference | reference_type | _is_consecutive | _status_code | space_id | transform_id | report_id | _logfile_id | environment_id | initiated_by_run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | G38x79ywBD5xrym6viZg | None | 2025-03-10 11:51:03.401601+00:00 | NaT | None | None | None | 0 | 1 | 1 | NaN | None | NaN | NaN | 2025-03-10 11:51:03.402000+00:00 | 1 | None | 1 |
| 2 | e3pw2GIAh9LPbuqX9Ssb | None | 2025-03-10 11:51:10.638584+00:00 | 2025-03-10 11:51:12.129604+00:00 | None | None | True | 0 | 1 | 2 | 3.0 | None | 2.0 | NaN | 2025-03-10 11:51:10.639000+00:00 | 1 | None | 1 |
| 3 | KUBeT9tGm256XqCsiFmh | None | 2025-03-10 11:51:13.597486+00:00 | 2025-03-10 11:51:13.649124+00:00 | None | None | None | 0 | 1 | 3 | NaN | None | NaN | 1.0 | 2025-03-10 11:51:13.598000+00:00 | 1 | None | 1 |
| 4 | 0ub78nidDwB05BOgFRte | None | 2025-03-10 11:51:14.170468+00:00 | 2025-03-10 11:51:14.227337+00:00 | None | None | None | 0 | 1 | 3 | NaN | None | NaN | 1.0 | 2025-03-10 11:51:14.170000+00:00 | 1 | None | 1 |
| 5 | OH7QI1Y7I5XFo9qfomAp | None | 2025-03-10 11:51:18.298835+00:00 | 2025-03-10 11:51:19.859074+00:00 | None | None | True | 0 | 1 | 4 | 3.0 | None | 2.0 | NaN | 2025-03-10 11:51:18.299000+00:00 | 1 | None | 1 |
| 6 | 84YncDZzJ4DmvUPVZAF6 | None | 2025-03-10 11:51:19.807968+00:00 | 2025-03-10 11:51:19.854665+00:00 | None | None | None | 0 | 1 | 5 | NaN | None | NaN | 5.0 | 2025-03-10 11:51:19.808000+00:00 | 1 | None | 1 |

Storage

| id | uid | root | description | type | region | instance_uid | space_id | run_id | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MICS1FRqVOxt | /home/runner/work/lamindb/lamindb/docs/test-track | None | local | None | 73KPGC58ahU9 | 1 | None | 2025-03-10 11:50:58.301000+00:00 | 1 | None | 1 |

Transform

| id | uid | key | description | type | source_code | hash | reference | reference_type | space_id | _template_id | version | is_latest | created_at | created_by_id | _aux | _branch_code |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 5 | e7FUrbehBr9F0000 | run-workflow.py/subset_dataframe.py | None | function | @ln.tracked()\ndef subset_dataframe(\n arti... | Dqbr_hMfHs17EhbPXP_PyQ | None | None | 1 | None | None | True | 2025-03-10 11:51:19.806000+00:00 | 1 | None | 1 |
| 4 | PrBojotEZdcL0000 | run-workflow.py | run-workflow.py | script | import argparse\nimport lamindb as ln\n\nln.Pa... | yqr8j5hTUulVRzv4J-o9SQ | None | None | 1 | None | None | True | 2025-03-10 11:51:18.295000+00:00 | 1 | None | 1 |
| 3 | sHWJJ5P8vZQc0000 | track.ipynb/subset_dataframe.py | None | function | @ln.tracked()\ndef subset_dataframe(\n inpu... | F_wwrfFs6zmzMGVilG2Prg | None | None | 1 | None | None | True | 2025-03-10 11:51:13.591000+00:00 | 1 | None | 1 |
| 2 | aosEGfdSQlyn0000 | run-track-with-params.py | run-track-with-params.py | script | import argparse\nimport lamindb as ln\n\nif __... | nRUs3ZjuVTbKtBmSXpVQ5A | None | None | 1 | None | None | True | 2025-03-10 11:51:10.635000+00:00 | 1 | None | 1 |
| 1 | SofKDGEYF6PJ0000 | track.ipynb | Track notebooks, scripts & functions | notebook | None | None | None | None | 1 | None | None | True | 2025-03-10 11:51:03.390000+00:00 | 1 | None | 1 |

Sync scripts with git¶

To sync with your git commit, add the following line to your script:

ln.settings.sync_git_repo = <YOUR-GIT-REPO-URL>

import lamindb as ln

ln.settings.sync_git_repo = "https://github.com/..."

ln.track()

# your code

ln.finish()

On the hub, the GitHub emoji is now clickable.

Manage notebook templates¶

A notebook acts like a template when you load it with lamin load. Suppose you run:

lamin load https://lamin.ai/account/instance/transform/Akd7gx7Y9oVO0000

Upon running the returned notebook, you’ll automatically create a new version and be able to browse it via the version dropdown on the UI.

Additionally, you can:

- label using ULabel, e.g., transform.ulabels.add(template_label)
- tag with an indicative version string, e.g., transform.version = "T1"; transform.save()

Saving a notebook as an artifact

Sometimes you might want to save a notebook as an artifact. This is how you can do it:

lamin save template1.ipynb --key templates/template1.ipynb --description "Template for analysis type 1" --registry artifact


assert my_project.artifacts.exists()

assert my_project.transforms.exists()

assert my_project.runs.exists()

# clean up test instance

!rm -r ./test-track

!lamin delete --force test-track

• deleting instance testuser1/test-track